【LLM】金融场景的大模型Lora微调实战

金融行业需要垂直领域LLM,因为存在金融安全和数据大多数存储在本地,在风控、精度、实时性有要求(1)500亿参数的BloombergGPTBloombergGPT金融大模型也是用transformer架构,用decoder路线, 构建目前规模最大的金融数据集FINPILE,对通用文本+金融知识的混合训练。用了512块40GB的A100 GPU,训练中备份了4个模型,每个模型分了128块GPU。(2

文章目录

一、金融大模型背景

- 金融行业需要垂直领域LLM,因为存在金融安全和数据大多数存储在本地,在风控、精度、实时性有要求

- (1)500亿参数的BloombergGPT

- BloombergGPT金融大模型也是用transformer架构,用decoder路线, 构建目前规模最大的金融数据集FINPILE,对通用文本+金融知识的混合训练。

- 用了512块40GB的A100 GPU,训练中备份了4个模型,每个模型分了128块GPU。

- (2)度小满5月的【源轩大模型】

- 使用hybrid-tuning方式,首个千亿参数金融大模型

- 在通用能力评测中,轩辕有10.2%的任务表现超越ChatGPT 3.5, 61.22%的任务表现与之持平,涉及数学计算、场景写作、逻辑推理、文本摘要等13个主要维度。

- 金融大模型GPT落地场景:

- 新闻情感分类 ——> 金融机构判断对某事件看法,辅助量化策略、投资决策

- 财务类知识问答 ——> 辅助金融机构进行信用评估,筛选概念股,辅助分析师对专业领域的学习

- 财务报表分析和会计稽查 ——> 生成财务分析报告和招股书,辅助会计和审计

二、大模型的研究问题

- LLM的理论基础:

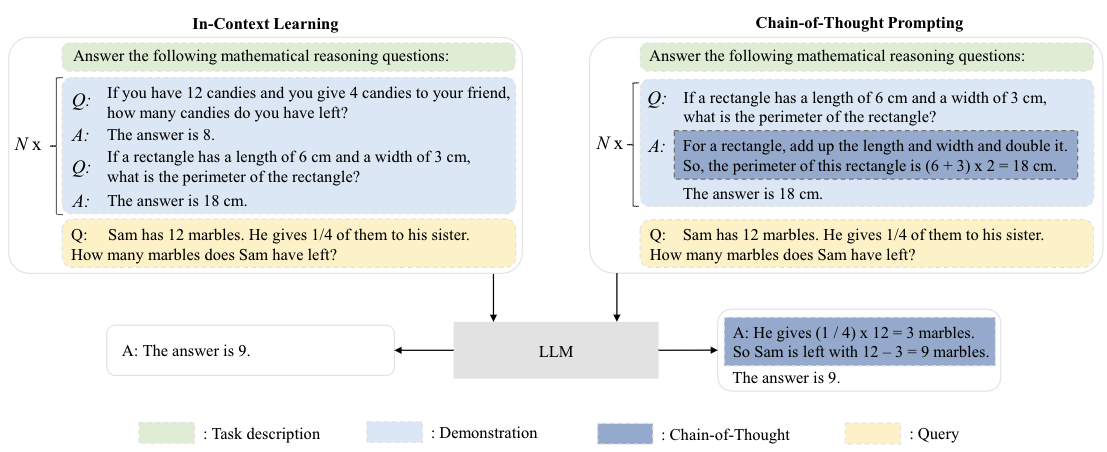

- 如Few/Zero-Shot Learning、In-Context Learning、Chain-of-Thought能力;

- zero-shot是模型训练中没有接触过这个类别的样本,但仍能对没见过的类别进行分类;few-shot是每个类别中只有少量样本,希望模型学习一定类别的大量数据后,对于新类别的少量样本数据能快速学习。few-show是meta-learning的一种。

- 网络架构:transformer架构,括分词、归一化方法、归一化位置、位置编码、注意力与偏置等常见模块。是否有比transformer更好的架构,如有学者受到数学相关方向的启发,提出非欧空间Manifold网络框架。

- 大模型的高效计算:模型并行、tensor卸载、优化器卸载等,微软的deepspeed等工具

- 推理效率:模型剪枝、知识蒸馏、参数量化等

- 大模型的高效适配下游任务:

- prompt learning提示学习:如指令微调

- 参数高效微调:只调整大模型里的少量参数

- 大模型的可控生成:通过指令微调、提示工程、思维链、RLHF等控制模型生成

- 伦理问题:RLHF、RLAIF等对齐方法提高生成质量

- 模型评估:专业考题进行评测、更强的模型给小模型打分、人工评测等

三、大模型技术路线

- Hugging Face 的 PEFT是一个库(LoRA 是其支持的技术之一,除此之外还有Prefix Tuning、P-Tuning、Prompt Tuning),可以让你使用各种基于 Transformer 结构的语言模型进行高效微调。

- AIpaca羊驼:让 OpenAI 的 text-davinci-003 模型以 self-instruct 方式生成 52K 指令遵循(instruction-following)样本,以此作为 Alpaca 的训练数据,最后训练的羊驼只有7B参数量。可以使用LoRA微调优化。

- LLM技术思路:

- 语言模型:llama、bloom、glm等

- 指令微调数据:alpaca_data、bella_data、guanaco_data等。目前指令微调数据上,很依赖alpaca以及chatgpt的self-instruct数据。数据处理参考上图

- 微调加速: lora(如Alpaca-Lora)等,还可以使用peft库、量化工具包bitsandbytes、deepspeed(先读

torch.distributed和ColossalAI再搞)、llama.cpp量化模型。在LoRA方法提出之前,也有很多方法尝试解决大模型微调困境的方法。其中有两个主要的方向:- 添加adapter层。adapter就是固定原有的参数,并添加一些额外参数用于微调;

- 由于某种形式的输入层激活。

- 训练优化方法:量化、3D并行、cpu卸载

四、LLaMA家族模型

五、Lora模型微调的原理

- prompt的本质是参数有效性学习(parameter-efficient learning, PEL),因为PLM全量参数更新训练耗时,而在参数有效性学习中,大模型只需指定或额外加入少量的可训练参数,冻结其他参数,提高训练效率和保证质量

- Lora低秩自适应,low-rank adaption,额外引入了可训练的低秩分解矩阵,同时固定预训练权重。通过反向传播学习分解的矩阵,将适应任务的新权重矩阵分解为低维(较小)矩阵,而不会丢失太多信息。

- 可以将新的lora权重矩阵与原始预训练权重合并,在推理中不会产生额外的开销;如上图所示,左边是预训练模型的权重,输入输出维度都是d,在训练时被冻结参数,右边对A使用随机的高斯初始化,B在训练初始为0。一个预训练的权重矩阵,使用低秩分解来表示,初始时△W=BA: h = W 0 x + Δ W x = W 0 x + B A x h=W_0 x+\Delta W x=W_0 x+B A x h=W0x+ΔWx=W0x+BAx

- LoRA原理:即在大型语言模型上对指定参数增加额外的低秩矩阵,并在模型训练过程中,仅训练而外增加的参数。当“秩值”远小于原始参数维度时,新增的低秩矩阵参数量很小,达到仅训练很小的参数,就能获取相应结果。

- 冻结预训练模型权重,并将可训练的秩分解矩阵注入到Transformer层的每个权重中,大大减少了下游任务的可训练参数数量。实际上是增加了右侧的“旁支”,也就是先用一个Linear层A,将数据从 d维降到r,再用第二个Linear层B,将数据从r变回d维。最后再将左右两部分的结果相加融合,得到输出的

hidden_state。

- 评价LLM生成文本的指标:困惑度、BLEU 和 ROUGE等

- Alpaca-Lora:基于LLaMA(7B)微调

项目链接:https://github.com/tloen/alpaca-lora

权重地址:https://huggingface.co/decapoda-research/llama-7b-hf- 项目诞生原因:Stanford Alpaca羊驼 是在 LLaMA 整个模型上微调,即对预训练模型中的所有参数都进行微调(full fine-tuning)。但该方法对于硬件成本要求仍然偏高且训练低效。LLaMA没有经过指令微调,生成效果较差

- 因此,Alpaca-Lora:利用 Lora 技术,在冻结原模型 LLaMA 参数的情况下,通过往模型中加入额外的网络层,并只训练这些新增的网络层参数。由于这些新增参数数量较少,这样不仅微调的成本显著下降(使用一块 RTX 4090 显卡,只用 5 个小时就训练了一个与 Alpaca 水平相当的模型,将这类模型对算力的需求降到了消费级),还能获得和全模型微调(full fine-tuning)类似的效果。

- 将LLaMA原始转钟转为transformers库对应的模型文件格式(也可以直接从huggingface上下载转好的模型,参考)

- 用LoRA(Low-rank Adaptation)微调模型、模型推理

- 将 LoRA 权重合并回基础模型以导出为 HuggingFace 格式和 PyTorch state_dicts。以帮助想要在 llama.cpp 或 alpaca.cpp 等项目中运行推理的用户

六、基于mt0-large进行Lora微调实战

- 下面以mt0-large模型进行lora为例:

- 选用金融领域情感分析任务

financial_sentiment_analysis,给定一个句子,要求识别出该句子是negative、positive还是neutral三个中的哪一个

next(iter(train_dataloader)).keys()

Out[2]: dict_keys(['input_ids', 'attention_mask', 'labels'])

# train_dataset.data如下所示

input_ids: [[[486,7834,304,259,35610,...,0,0,0,0,0],[259,229832,259,277,263,...,0,0,0,0,0],...,[259,96890,259,5330,259,...,0,0,0,0,0],[486,5835,259,39509,259,...,0,0,0,0,0]],[[1494,1546,259,69541,259,...,0,0,0,0,0],[486,7495,13159,339,2847,...,0,0,0,0,0],...,[20871,72726,702,92223,332,...,0,0,0,0,0],[486,584,193394,347,11470,...,0,0,0,0,0]],[[274,298,259,62434,263,...,0,0,0,0,0],[1477,514,1904,259,263,...,0,0,0,0,0],...,[143129,268,259,277,263,...,0,0,0,0,0],[35446,339,31499,285,288,...,0,0,0,0,0]]]

attention_mask: [[[1,1,1,1,1,...,0,0,0,0,0],[1,1,1,1,1,...,0,0,0,0,0],...,[1,1,1,1,1,...,0,0,0,0,0],[1,1,1,1,1,...,0,0,0,0,0]],[[1,1,1,1,1,...,0,0,0,0,0],[1,1,1,1,1,...,0,0,0,0,0],...,[1,1,1,1,1,...,0,0,0,0,0],[1,1,1,1,1,...,0,0,0,0,0]],[[1,1,1,1,1,...,0,0,0,0,0],[1,1,1,1,1,...,0,0,0,0,0],...,[1,1,1,1,1,...,0,0,0,0,0],[1,1,1,1,1,...,0,0,0,0,0]]]

labels: [[[59006,1,-100],[59006,1,-100],...,[59006,1,-100],[59006,1,-100]],[[18205,1,-100],[59006,1,-100],...,[259,32588,1],[18205,1,-100]],[[59006,1,-100],[59006,1,-100],...,[59006,1,-100],[59006,1,-100]]]

- 下面借助

peft库(Parameter-Efficient Fine-Tuning)进行微调,支持如下tuning:- Adapter Tuning(固定原预训练模型的参数 只对新增的adapter进行微调)

- Prefix Tuning(在输入token前构造一段任务相关的virtual tokens作为prefix,训练时只更新Prefix不分的参数,而Transformer的其他不分参数固定,和构造prompt类似,只是prompt是人为构造的即无法在模型训练时更新参数,而Prefix可以学习<隐式>的prompt)

- Prompt Tuning(Prefix Tuning的简化版,只在输入层加入prompt tokens,并不需要加入MLP)

- P-tuning(将prompt转为可学习的embedding层,v2则加入了prompts tokens作为输入)

- LoRA(Low-Rank Adaption,为了解决adapter增加模型深度而增加模型推理时间、上面几种tuning中prompt较难训练,减少模型的可用序列长度)

- 该方法可以在推理时直接用训练好的AB两个矩阵和原预训练模型的参数相加,相加结果替换原预训练模型参数。

- 相当于用LoRA模拟full-tunetune过程

# !/usr/bin/python

# -*- coding: utf-8 -*-

"""

@Author : guomiansheng

@Software : Pycharm

@Contact : 864934027@qq.com

@File : main.py

"""

from transformers import AutoModelForSeq2SeqLM

from peft import get_peft_config, get_peft_model, get_peft_model_state_dict, LoraConfig, TaskType

import torch

from datasets import load_dataset

import os

os.environ["TOKENIZERS_PARALLELISM"] = "false"

from transformers import AutoTokenizer

from torch.utils.data import DataLoader

from transformers import default_data_collator, get_linear_schedule_with_warmup

from tqdm import tqdm

from datasets import load_dataset

def train_model():

# device = "cuda"

device = "mps"

model_name_or_path = "bigscience/mt0-large"

tokenizer_name_or_path = "bigscience/mt0-large"

checkpoint_name = "financial_sentiment_analysis_lora_v1.pt"

text_column = "sentence"

label_column = "text_label"

max_length = 128

lr = 1e-3

num_epochs = 3

batch_size = 8

# 搭建model

peft_config = LoraConfig(task_type=TaskType.SEQ_2_SEQ_LM, inference_mode=False, r=8, lora_alpha=32,

lora_dropout=0.1)

model = AutoModelForSeq2SeqLM.from_pretrained(model_name_or_path)

model = get_peft_model(model, peft_config)

model.print_trainable_parameters()

# 加载数据

dataset = load_dataset("financial_phrasebank", "sentences_allagree")

dataset = dataset["train"].train_test_split(test_size=0.1)

dataset["validation"] = dataset["test"]

del dataset["test"]

classes = dataset["train"].features["label"].names

dataset = dataset.map(

lambda x: {"text_label": [classes[label] for label in x["label"]]},

batched=True,

num_proc=1,

)

# 训练数据预处理

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path)

def preprocess_function(examples):

inputs = examples[text_column]

targets = examples[label_column]

model_inputs = tokenizer(inputs, max_length=max_length, padding="max_length", truncation=True,

return_tensors="pt")

labels = tokenizer(targets, max_length=3, padding="max_length", truncation=True, return_tensors="pt")

labels = labels["input_ids"]

labels[labels == tokenizer.pad_token_id] = -100

model_inputs["labels"] = labels

return model_inputs

processed_datasets = dataset.map(

preprocess_function,

batched=True,

num_proc=1,

remove_columns=dataset["train"].column_names,

load_from_cache_file=False,

desc="Running tokenizer on dataset",

)

train_dataset = processed_datasets["train"]

eval_dataset = processed_datasets["validation"]

train_dataloader = DataLoader(

train_dataset, shuffle=True, collate_fn=default_data_collator, batch_size=batch_size, pin_memory=True

)

eval_dataloader = DataLoader(eval_dataset, collate_fn=default_data_collator, batch_size=batch_size, pin_memory=True)

# 设定优化器和正则项

optimizer = torch.optim.AdamW(model.parameters(), lr=lr)

lr_scheduler = get_linear_schedule_with_warmup(

optimizer=optimizer,

num_warmup_steps=0,

num_training_steps=(len(train_dataloader) * num_epochs),

)

# 训练和评估

model = model.to(device)

for epoch in range(num_epochs):

model.train()

total_loss = 0

for step, batch in enumerate(tqdm(train_dataloader)):

batch = {k: v.to(device) for k, v in batch.items()}

outputs = model(**batch)

loss = outputs.loss

total_loss += loss.detach().float()

loss.backward()

optimizer.step()

lr_scheduler.step()

optimizer.zero_grad()

model.eval()

eval_loss = 0

eval_preds = []

for step, batch in enumerate(tqdm(eval_dataloader)):

batch = {k: v.to(device) for k, v in batch.items()}

with torch.no_grad():

outputs = model(**batch)

loss = outputs.loss

eval_loss += loss.detach().float()

eval_preds.extend(

tokenizer.batch_decode(torch.argmax(outputs.logits, -1).detach().cpu().numpy(),

skip_special_tokens=True)

)

eval_epoch_loss = eval_loss / len(eval_dataloader)

eval_ppl = torch.exp(eval_epoch_loss)

train_epoch_loss = total_loss / len(train_dataloader)

train_ppl = torch.exp(train_epoch_loss)

print(f"{epoch=}: {train_ppl=} {train_epoch_loss=} {eval_ppl=} {eval_epoch_loss=}")

# 保存模型

peft_model_id = f"{model_name_or_path}_{peft_config.peft_type}_{peft_config.task_type}"

model.save_pretrained(peft_model_id)

def inference_model():

# device = "cuda"

device = "mps"

model_name_or_path = "bigscience/mt0-large"

tokenizer_name_or_path = "bigscience/mt0-large"

checkpoint_name = "financial_sentiment_analysis_lora_v1.pt"

text_column = "sentence"

label_column = "text_label"

max_length = 128

lr = 1e-3

num_epochs = 3

batch_size = 8

# 搭建model

peft_config = LoraConfig(task_type=TaskType.SEQ_2_SEQ_LM, inference_mode=False, r=8, lora_alpha=32,

lora_dropout=0.1)

model = AutoModelForSeq2SeqLM.from_pretrained(model_name_or_path)

model = get_peft_model(model, peft_config)

model.print_trainable_parameters()

# 加载数据

dataset = load_dataset("financial_phrasebank", "sentences_allagree")

dataset = dataset["train"].train_test_split(test_size=0.1)

dataset["validation"] = dataset["test"]

del dataset["test"]

classes = dataset["train"].features["label"].names

dataset = dataset.map(

lambda x: {"text_label": [classes[label] for label in x["label"]]},

batched=True,

num_proc=1,

)

# 训练数据预处理

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path)

def preprocess_function(examples):

inputs = examples[text_column]

targets = examples[label_column]

model_inputs = tokenizer(inputs, max_length=max_length, padding="max_length", truncation=True,

return_tensors="pt")

labels = tokenizer(targets, max_length=3, padding="max_length", truncation=True, return_tensors="pt")

labels = labels["input_ids"]

labels[labels == tokenizer.pad_token_id] = -100

model_inputs["labels"] = labels

return model_inputs

processed_datasets = dataset.map(

preprocess_function,

batched=True,

num_proc=1,

remove_columns=dataset["train"].column_names,

load_from_cache_file=False,

desc="Running tokenizer on dataset",

)

train_dataset = processed_datasets["train"]

eval_dataset = processed_datasets["validation"]

train_dataloader = DataLoader(

train_dataset, shuffle=True, collate_fn=default_data_collator, batch_size=batch_size, pin_memory=True

)

eval_dataloader = DataLoader(eval_dataset, collate_fn=default_data_collator, batch_size=batch_size, pin_memory=True)

# 设定优化器和正则项

optimizer = torch.optim.AdamW(model.parameters(), lr=lr)

lr_scheduler = get_linear_schedule_with_warmup(

optimizer=optimizer,

num_warmup_steps=0,

num_training_steps=(len(train_dataloader) * num_epochs),

)

# 训练和评估

model = model.to(device)

# 模型推理预测

from peft import PeftModel, PeftConfig

peft_model_id = f"{model_name_or_path}_{peft_config.peft_type}_{peft_config.task_type}"

config = PeftConfig.from_pretrained(peft_model_id)

model = AutoModelForSeq2SeqLM.from_pretrained(config.base_model_name_or_path)

model = PeftModel.from_pretrained(model, peft_model_id)

model.eval()

i = 0

inputs = tokenizer(dataset["validation"][text_column][i], return_tensors="pt")

print(dataset["validation"][text_column][i])

print(inputs)

with torch.no_grad():

outputs = model.generate(input_ids=inputs["input_ids"], max_new_tokens=10)

print(outputs)

print(tokenizer.batch_decode(outputs.detach().cpu().numpy(), skip_special_tokens=True))

print("=============test=============")

if __name__ == '__main__':

# train_model()

inference_model()

可以看到上面的LoraConfig参数如下:

peft_config = LoraConfig(task_type=TaskType.SEQ_2_SEQ_LM,

inference_mode=False,

r=8,

lora_alpha=32,

lora_dropout=0.1)

task_type:任务类型:

class TaskType(str, enum.Enum):

SEQ_CLS = "SEQ_CLS" 常规分类任务

SEQ_2_SEQ_LM = "SEQ_2_SEQ_LM" seq2seq任务

CAUSAL_LM = "CAUSAL_LM" LM任务

TOKEN_CLS = "TOKEN_CLS" token的分类任务:序列标注之类的

inference_mode:r:lora的秩;lora_A用高斯分布初始化,lora_B用0初始化lora_alpha:lora微调的缩放系数lora_dropout:lora微调的dropout系数learning_rate:adamw优化器的初始学习速率

也可以看LoraConfig类的定义中的属性:

class LoraConfig(PeftConfig):

r: int = field(default=8, metadata={"help": "Lora attention dimension"})

target_modules: Optional[Union[List[str], str]] = field(

default=None,

metadata={

"help": "List of module names or regex expression of the module names to replace with Lora."

"For example, ['q', 'v'] or '.*decoder.*(SelfAttention|EncDecAttention).*(q|v)$' "

},

)

lora_alpha: int = field(default=None, metadata={"help": "Lora alpha"})

lora_dropout: float = field(default=None, metadata={"help": "Lora dropout"})

fan_in_fan_out: bool = field(

default=False,

metadata={"help": "Set this to True if the layer to replace stores weight like (fan_in, fan_out)"},

)

bias: str = field(default="none", metadata={"help": "Bias type for Lora. Can be 'none', 'all' or 'lora_only'"})

modules_to_save: Optional[List[str]] = field(

default=None,

metadata={

"help": "List of modules apart from LoRA layers to be set as trainable and saved in the final checkpoint. "

"For example, in Sequence Classification or Token Classification tasks, "

"the final layer `classifier/score` are randomly initialized and as such need to be trainable and saved."

},

)

init_lora_weights: bool = field(

default=True,

metadata={"help": "Whether to initialize the weights of the Lora layers."},

)

def __post_init__(self):

self.peft_type = PeftType.LORA

- r (

int): Lora attention dimension. - target_modules (

Union[List[str],str]): The names of the modules to apply Lora to. - lora_alpha (

float): The alpha parameter for Lora scaling. - lora_dropout (

float): The dropout probability for Lora layers. - fan_in_fan_out (

bool): Set this to True if the layer to replace stores weight like (fan_in, fan_out).- For example, gpt-2 uses

Conv1Dwhich stores weights like (fan_in, fan_out) and hence this should be set toTrue.:

- For example, gpt-2 uses

- bias (

str): Bias type for Lora. Can be ‘none’, ‘all’ or ‘lora_only’ - modules_to_save (

List[str]):List of modules apart from LoRA layers to be set as trainable

and saved in the final checkpoint.

具体Lora_layer层的定义如下,lora是在自定义的embedding类中执行的(自定义embedding类,继承nn.embedding和loralayer类)

class LoraLayer:

def __init__(

self,

in_features: int,

out_features: int,

):

self.r = {}

self.lora_alpha = {}

self.scaling = {}

self.lora_dropout = nn.ModuleDict({})

self.lora_A = nn.ModuleDict({})

self.lora_B = nn.ModuleDict({})

# For Embedding layer

self.lora_embedding_A = nn.ParameterDict({})

self.lora_embedding_B = nn.ParameterDict({})

# Mark the weight as unmerged

self.merged = False

self.disable_adapters = False

self.in_features = in_features

self.out_features = out_features

def update_layer(self, adapter_name, r, lora_alpha, lora_dropout, init_lora_weights):

self.r[adapter_name] = r

self.lora_alpha[adapter_name] = lora_alpha

if lora_dropout > 0.0:

lora_dropout_layer = nn.Dropout(p=lora_dropout)

else:

lora_dropout_layer = nn.Identity()

self.lora_dropout.update(nn.ModuleDict({adapter_name: lora_dropout_layer}))

# Actual trainable parameters

if r > 0:

self.lora_A.update(nn.ModuleDict({adapter_name: nn.Linear(self.in_features, r, bias=False)}))

self.lora_B.update(nn.ModuleDict({adapter_name: nn.Linear(r, self.out_features, bias=False)}))

self.scaling[adapter_name] = lora_alpha / r

if init_lora_weights:

self.reset_lora_parameters(adapter_name)

self.to(self.weight.device)

def update_layer_embedding(self, adapter_name, r, lora_alpha, lora_dropout, init_lora_weights):

self.r[adapter_name] = r

self.lora_alpha[adapter_name] = lora_alpha

if lora_dropout > 0.0:

lora_dropout_layer = nn.Dropout(p=lora_dropout)

else:

lora_dropout_layer = nn.Identity()

self.lora_dropout.update(nn.ModuleDict({adapter_name: lora_dropout_layer}))

# Actual trainable parameters

if r > 0:

self.lora_embedding_A.update(

nn.ParameterDict({adapter_name: nn.Parameter(self.weight.new_zeros((r, self.in_features)))})

)

self.lora_embedding_B.update(

nn.ParameterDict({adapter_name: nn.Parameter(self.weight.new_zeros((self.out_features, r)))})

)

self.scaling[adapter_name] = lora_alpha / r

if init_lora_weights:

self.reset_lora_parameters(adapter_name)

self.to(self.weight.device)

def reset_lora_parameters(self, adapter_name):

if adapter_name in self.lora_A.keys():

# initialize A the same way as the default for nn.Linear and B to zero

nn.init.kaiming_uniform_(self.lora_A[adapter_name].weight, a=math.sqrt(5))

nn.init.zeros_(self.lora_B[adapter_name].weight)

if adapter_name in self.lora_embedding_A.keys():

# initialize a the same way as the default for nn.linear and b to zero

nn.init.zeros_(self.lora_embedding_A[adapter_name])

nn.init.normal_(self.lora_embedding_B[adapter_name])

七、对chatglm2进行lora微调

lora微调后需要将lora权重和原始模型权重合并。

from transformers import AutoTokenizer, AutoModel, AutoConfig

from peft import PeftModel, PeftConfig

# lora tuning

model_name_or_path = "../model/chatglm2-6b"

# 训练后的lora保存的路径

peft_model_id = "../chatglm_v2_6b_lora/output/checkpoint-3000"

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, trust_remote_code=True)

model = AutoModel.from_pretrained(model_name_or_path, trust_remote_code=True, device_map='auto',

torch_dtype=torch.bfloat16).to("cuda") # .half().cuda()

model = PeftModel.from_pretrained(model, peft_model_id)

model = model.eval()

# 多显卡支持,使用下面两行代替上面一行,将num_gpus改为你实际的显卡数量

# from utils import load_model_on_gpus

# model = load_model_on_gpus("THUDM/chatglm2-6b", num_gpus=2)

# model = model.eval()

这里进行lora微调的效果是比官方的p-tuning v2效果好的,并且没有出现严重的知识遗忘特点,比如问题你是谁,他能回答自己是chatglm blabla。

用户:你是谁

你是谁

ChatGLM:我是一个名为 ChatGLM2-6B 的人工智能助手,是基于清华大学 KEG 实验室和智谱 AI 公司于 2023 年共同训练的语言模型开发的。我的任务是针对用户的问题和要求提供适当的答复和支持。

用户:类型#裙*版型#显瘦*风格#文艺*风格#简约*图案#印花*图案#撞色*裙下摆#压褶*裙长#连衣裙*裙领型#圆领

��#撞色*裙下摆#压褶*裙长#连衣裙*裙领型#圆领

ChatGLM:连衣裙简约的设计,采用撞色的印花图案,让整体显得很有文艺气息,又很显瘦。

小圆领的设计,简单大方,又很有气质。压褶的设计,让裙子看起来很有层次感,又很有质感。

可以和p-tuning v2对比刚才广告词生成的结果,p-tuning v2应该是在这种垂直领域更好一丢丢,比如对于长款裙还会描述遮住腿型,还描述文艺风格 满足你XX的气质吸引顾客。

用户:类型#裙*版型#显瘦*风格#文艺*风格#简约*图案#印花*图案#撞色*裙下摆#压褶*裙长#连衣裙*裙领型#圆领

��#撞色*裙下摆#压褶*裙长#连衣裙*裙领型#圆领

ChatGLM:这条裙子非常百搭,可以搭配各种不同的上衣,也可以作为连衣裙来穿。

它的版型比较有型,可以显瘦,而且它属于比较文艺的风格,可以满足你的小女人的气质。

这条裙子的风格比较简约,不繁杂,适合各种不同的搭配。它属于长款裙,可以遮住你的腿型,让你看起来更加高挑。

注意:将lora微调训练的权重、base model权重合可以参考merge_peft_adapter.py如下

# -*- coding: utf-8 -*-

"""

Usage:

python merge_peft_adapter.py \

--base_model_name_or_path path/to/llama/model \

--tokenizer_path path/to/llama/tokenizer \

--peft_model_path path/to/lora/model \

--output_dir path/to/output/dir

after merged, chatglm and baichuan model need copy python script to output dir.

"""

import argparse

import torch

from peft import PeftModel, PeftConfig

from transformers import (

AutoModel,

AutoTokenizer,

BloomForCausalLM,

BloomTokenizerFast,

AutoModelForCausalLM,

LlamaTokenizer,

LlamaForCausalLM,

AutoModelForSequenceClassification,

)

MODEL_CLASSES = {

"bloom": (BloomForCausalLM, BloomTokenizerFast),

"chatglm": (AutoModel, AutoTokenizer),

"llama": (LlamaForCausalLM, LlamaTokenizer),

"baichuan": (AutoModelForCausalLM, AutoTokenizer),

"auto": (AutoModelForCausalLM, AutoTokenizer),

}

def main():

parser = argparse.ArgumentParser()

parser.add_argument('--model_type', default=None, type=str, required=True)

parser.add_argument('--base_model_name_or_path', default=None, required=True, type=str,

help="Base model name or path")

parser.add_argument('--tokenizer_path', default=None, type=str,

help="Please specify tokenization path.")

parser.add_argument('--peft_model_path', default=None, required=True, type=str,

help="Please specify LoRA model to be merged.")

parser.add_argument('--resize_emb', action='store_true', help='Whether to resize model token embeddings')

parser.add_argument('--output_dir', default='./merged', type=str)

args = parser.parse_args()

print(args)

base_model_path = args.base_model_name_or_path

peft_model_path = args.peft_model_path

output_dir = args.output_dir

print(f"Base model: {base_model_path}")

print(f"LoRA model: {peft_model_path}")

peft_config = PeftConfig.from_pretrained(peft_model_path)

model_class, tokenizer_class = MODEL_CLASSES[args.model_type]

if peft_config.task_type == "SEQ_CLS":

print("Loading LoRA for sequence classification model")

if args.model_type == "chatglm":

raise ValueError("chatglm does not support sequence classification")

base_model = AutoModelForSequenceClassification.from_pretrained(

base_model_path,

load_in_8bit=False,

torch_dtype=torch.float16,

trust_remote_code=True,

device_map="auto",

)

else:

print("Loading LoRA for causal language model")

base_model = model_class.from_pretrained(

base_model_path,

load_in_8bit=False,

torch_dtype=torch.float16,

trust_remote_code=True,

device_map="auto",

)

if args.tokenizer_path:

tokenizer = tokenizer_class.from_pretrained(args.tokenizer_path, trust_remote_code=True)

else:

tokenizer = tokenizer_class.from_pretrained(peft_model_path, trust_remote_code=True)

if args.resize_emb:

base_model_token_size = base_model.get_input_embeddings().weight.size(0)

if base_model_token_size != len(tokenizer):

base_model.resize_token_embeddings(len(tokenizer))

print(f"Resize vocabulary size {base_model_token_size} to {len(tokenizer)}")

lora_model = PeftModel.from_pretrained(

base_model,

peft_model_path,

device_map="auto",

torch_dtype=torch.float16,

)

lora_model.eval()

print(f"Merging with merge_and_unload...")

base_model = lora_model.merge_and_unload()

print("Saving to Hugging Face format...")

tokenizer.save_pretrained(output_dir)

base_model.save_pretrained(output_dir)

print(f"Done! model saved to {output_dir}")

if __name__ == '__main__':

main()

八、lora微调还是全参微调

一般全参会比lora微调好:https://github.com/huggingface/peft/issues/622

论文:《A Comparative Study between Full-Parameter and LoRA-basedFine-Tuning on Chinese Instruction Data for Instruction Following LargeLanguage Mode》:

- FT效果好于lora微调。M是百万条。FT是lora时间的3-5倍。

- 第三行是增量微调,效果更好

实验细节(评估模型使用GPT进行打分):

Reference

[1] A Survey of Large Language Models. Wayne Xin Zhao

[2] 大模型论文综述介绍

[3] LLaMA类模型没那么难,LoRA将模型微调缩减到几小时

[4] RLHF中的PPO算法原理及其实现

[5] 基于DeepSpeed训练ChatGPT

[6] Prompt-Tuning——深度解读一种新的微调范式

[7] 大模型参数高效微调技术原理综述(七)-最佳实践、总结

[8] chatGLM2-6B模型的全参数微调(改进多轮对话交互质量等):https://github.com/SpongebBob/Finetune-ChatGLM2-6B

[9] 大模型微调样本构造的trick

[10] 大模型参数高效微调技术原理综述(一)-背景、参数高效微调简介(附全量参数微调与参数高效微调对比-表格)

[11] 大模型训练之微调篇.无数据不智能

[12] 理解金融报告:使用大模型.无数据不智能

[13] Scaling Down to Scale Up: A Guide to Parameter-Efficient Fine-Tuning

[14] 低资源微调大模型:LoRA模型思想与BLOOM-LORA代码实现分析

[15] 模型和指令微调方法.山顶夕景

[16] 详谈大模型训练和推理优化技术

[17] LLM+LoRa微调加速技术原理及基于PEFT的动手实践:一些思考和mt0-large+lora完整案例

[18] 再看大模型Lora微调加速是否有效:Full-Parameter全参数微调与LoRA低秩微调的性能对比开源实验介绍

[19] 微调范式对比Freeze、P-Tuning、Lora、full-Finetune开源实现

[20] 基于GLM-6B对话模型的实体属性抽取项目实现解析:对Zero-shot与In-Context Learning的若干思考

[21] 微调实战:DeepSpeed+Transformers实现简单快捷上手百亿参数模型微调

[22] LLaMA:小参数+大数据的开放、高效基础语言模型阅读笔记

[23] 代码角度看LLaMA语言模型

[24] ChatGPT应用端的Prompt解析:从概念、基本构成、常见任务、构造策略到开源工具与数据集

[25] LLM实战:大语言模型BLOOM推理工具测试实践与效果分析实录

[26] 谈langchain大模型外挂知识库问答系统核心部件:如何更好地解析、分割复杂非结构化文本

[27] 看支持32K上下文的ChatGLM2-6B模型:优化点简读及现有开源模型主流训练优化点概述

[28] 极低资源条件下如何微调大模型:LoRA模型思想与BLOOM-LORA代码实现分析

[29] The Power of Scale for Parameter-Efficient Prompt Tuning

[30] https://github.com/mymusise/ChatGLM-Tuning

一种平价的 Chatgpt 实现方案,基于清华的ChatGLM-6B+ LoRA 进行finetune

[31] https://github.com/jxhe/unify-parameter-efficient-tuning

[31] 简单分析LoRA方法

[32] financial_phrasebank dataset.huggingface

[33] GPT大语言模型Alpaca-lora本地化部署实践.某东技术

[34] 金融大模型:FinGLM : github.com/MetaGLM/FinGLM

[35] Fine-Tuning LLMs: LoRA or Full-Parameter? An in-depth Analysis with Llama 2

更多推荐

已为社区贡献3条内容

已为社区贡献3条内容

所有评论(0)